Architecture

Alauda Distributed Tracing is based on Jaeger v2 and Alauda Build of OpenTelemetry v2. Jaeger instances are deployed through the OpenTelemetry Operator, and Elasticsearch is used as the backend storage. In this architecture, Jaeger v2 provides the core tracing backend capabilities for data ingestion, query, and visualization.

Jaeger v2 is designed to be a versatile and flexible tracing platform. It ships as a single binary that can be configured to perform different roles within the Jaeger architecture.

Roles

- collector: Receives incoming trace data from applications and writes it into a storage backend.

- query: Serves the APIs and the user interface for querying and visualizing traces.

- es-rollover: Manages Elasticsearch rollover-based index operations for Jaeger. It is used to prepare aliases, indices, and templates for rollover deployments, and can periodically roll the write alias to a new index while updating read aliases.
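The rollover behavior described for es-rollover can be pictured with a small model. This is only a sketch of the idea, not the tool's implementation: the index naming scheme (`<prefix>-000001`) and the `init_rollover` / `rollover` helpers are assumptions made for illustration, and no Elasticsearch calls are made.

```python
def init_rollover(prefix):
    """Create the first index and point both aliases at it (illustrative model)."""
    first = f"{prefix}-000001"
    return {"write": first, "read": [first]}


def rollover(state):
    """Move the write alias to a new index and add that index to the read alias."""
    prefix, _, seq = state["write"].rpartition("-")
    new_index = f"{prefix}-{int(seq) + 1:06d}"
    return {"write": new_index, "read": state["read"] + [new_index]}


state = init_rollover("jaeger-span")  # hypothetical index prefix
state = rollover(state)
print(state["write"])  # index that now receives new spans
print(state["read"])   # indices that queries still search
```

The point of the model is that writes always target a single, newest index, while reads continue to span every index that has not yet been removed from the read alias.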

Storage architecture

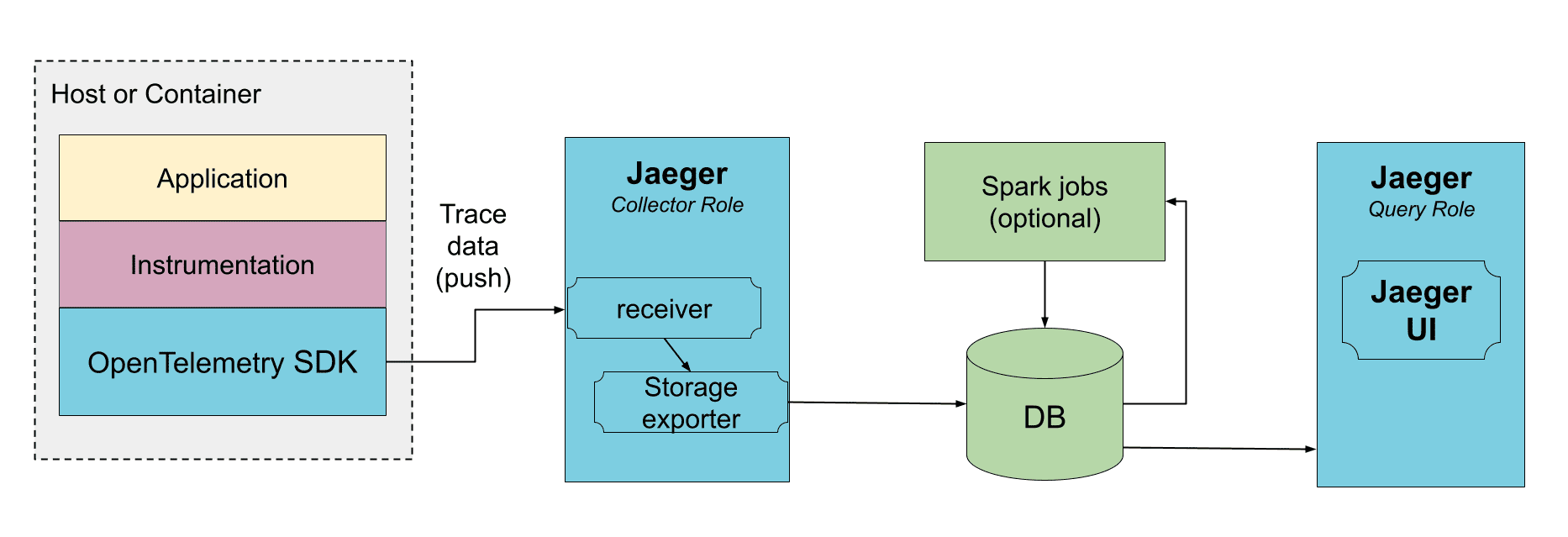

Direct to storage

In this deployment the collectors receive the data from traced applications and write it directly to storage. The storage must be able to handle both average and peak traffic. The collectors may use an in-memory queue to smooth short-term traffic peaks, but a sustained traffic spike may result in dropped data if the storage is not able to keep up.
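The trade-off above can be illustrated with a toy simulation. It is a minimal sketch under stated assumptions: `BoundedSpanQueue`, `simulate`, the tick-based model, and all the numbers are hypothetical and do not reflect how the Jaeger collector is actually implemented or configured.

```python
from collections import deque


class BoundedSpanQueue:
    """Hypothetical in-memory buffer between ingestion and storage writes."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.items = deque()
        self.dropped = 0

    def offer(self, span):
        # Reject the span when the buffer is full instead of blocking the sender.
        if len(self.items) >= self.capacity:
            self.dropped += 1
            return False
        self.items.append(span)
        return True

    def drain(self, max_items):
        # Model storage accepting at most max_items spans per tick.
        n = min(max_items, len(self.items))
        return [self.items.popleft() for _ in range(n)]


def simulate(arrivals_per_tick, storage_rate, capacity):
    """Return (written, dropped) for a sequence of per-tick arrival counts."""
    queue = BoundedSpanQueue(capacity)
    written = 0
    for arrivals in arrivals_per_tick:
        for i in range(arrivals):
            queue.offer(i)
        written += len(queue.drain(storage_rate))
    # Let storage catch up on whatever is still buffered after traffic stops.
    while queue.items:
        written += len(queue.drain(storage_rate))
    return written, queue.dropped


short_peak = simulate([5, 20, 5, 5], storage_rate=10, capacity=20)
sustained = simulate([20, 20, 20, 20], storage_rate=10, capacity=20)
print(short_peak, sustained)
```

In this model the single burst of 20 spans is absorbed by the queue and nothing is lost, while the sustained load at twice the storage rate fills the queue and spans start being dropped.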

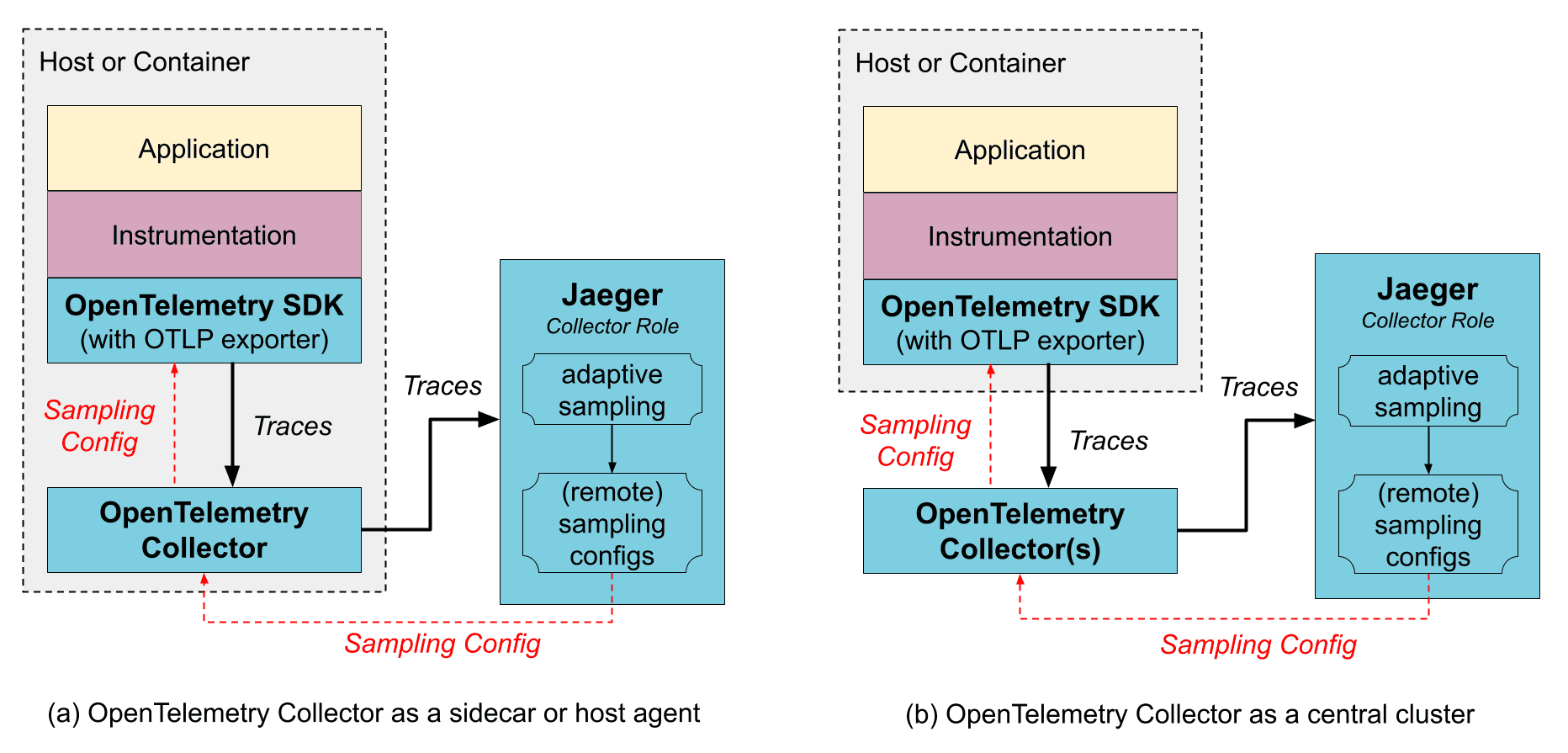

With OpenTelemetry Collector

You do not need the OpenTelemetry Collector to operate Jaeger, because Jaeger is itself a customized distribution of the OpenTelemetry Collector that can run in different roles. However, if you already use OpenTelemetry Collectors to gather other types of telemetry or to pre-process or enrich tracing data, you can place them in front of Jaeger in the collection pipeline. OpenTelemetry Collectors can run as an application sidecar or as a remote service cluster.

The OpenTelemetry Collector supports Jaeger's Remote Sampling protocol and can either serve static configurations from config files directly, or proxy the requests to the Jaeger backend (e.g., when using adaptive sampling).
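To make the "static configuration" case concrete, the sketch below resolves a sampling strategy for a service from a small JSON document. The document is modeled loosely on Jaeger's sampling strategies file; treat the exact field names, the service names, and the `resolve_strategy` helper as assumptions for illustration rather than a reference for the real file format or protocol.

```python
import json

# Hypothetical static strategies: one per-service override plus a default.
STRATEGIES_JSON = """
{
  "service_strategies": [
    {"service": "checkout", "type": "probabilistic", "param": 0.8},
    {"service": "search", "type": "ratelimiting", "param": 5}
  ],
  "default_strategy": {"type": "probabilistic", "param": 0.1}
}
"""


def resolve_strategy(config, service):
    """Return the strategy for a service, falling back to the default."""
    for entry in config.get("service_strategies", []):
        if entry["service"] == service:
            return {"type": entry["type"], "param": entry["param"]}
    return dict(config["default_strategy"])


config = json.loads(STRATEGIES_JSON)
print(resolve_strategy(config, "checkout"))
print(resolve_strategy(config, "unknown-service"))
```

An SDK polling a remote sampling endpoint would receive an answer of this kind for its own service name; with adaptive sampling, the answer would instead be computed by the Jaeger backend.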

OpenTelemetry Collector as a sidecar / host agent

Benefits:

- The SDK configuration is simplified, because both the trace export endpoint and the sampling configuration endpoint can point to localhost, so applications do not need to discover where those services run remotely.

- The Collector can enrich data by adding environment information, such as the Kubernetes pod name.

- Resource usage for data enrichment can be distributed across all application hosts.

Downsides:

- An extra layer of marshaling/unmarshaling the data.

OpenTelemetry Collector as a remote cluster

Benefits:

- Sharding capabilities, e.g., when using tail-based sampling.

Downsides:

- An extra layer of marshaling/unmarshaling the data.
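The sharding benefit matters for tail-based sampling because a sampling decision needs every span of a trace to arrive at the same collector instance. The following is a minimal sketch of trace-ID-based routing; the `pick_collector` and `route_spans` helpers, the span dictionaries, and the collector names are hypothetical, and a simple hash-modulo scheme is used in place of whatever routing a real deployment applies.

```python
import hashlib


def pick_collector(trace_id, collectors):
    """Map a trace ID to one collector deterministically."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(collectors)
    return collectors[index]


def route_spans(spans, collectors):
    """Group spans by the collector that owns their trace."""
    routed = {name: [] for name in collectors}
    for span in spans:
        routed[pick_collector(span["trace_id"], collectors)].append(span)
    return routed


collectors = ["collector-0", "collector-1", "collector-2"]  # hypothetical names
spans = [
    {"trace_id": "a1", "name": "GET /cart"},
    {"trace_id": "b2", "name": "GET /search"},
    {"trace_id": "a1", "name": "SELECT cart_items"},
]
routed = route_spans(spans, collectors)
for name, assigned in routed.items():
    print(name, [s["name"] for s in assigned])
```

Note that plain modulo hashing reassigns most traces when the number of collectors changes, so it is only suitable as an illustration of the routing idea.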

Jaeger Binary

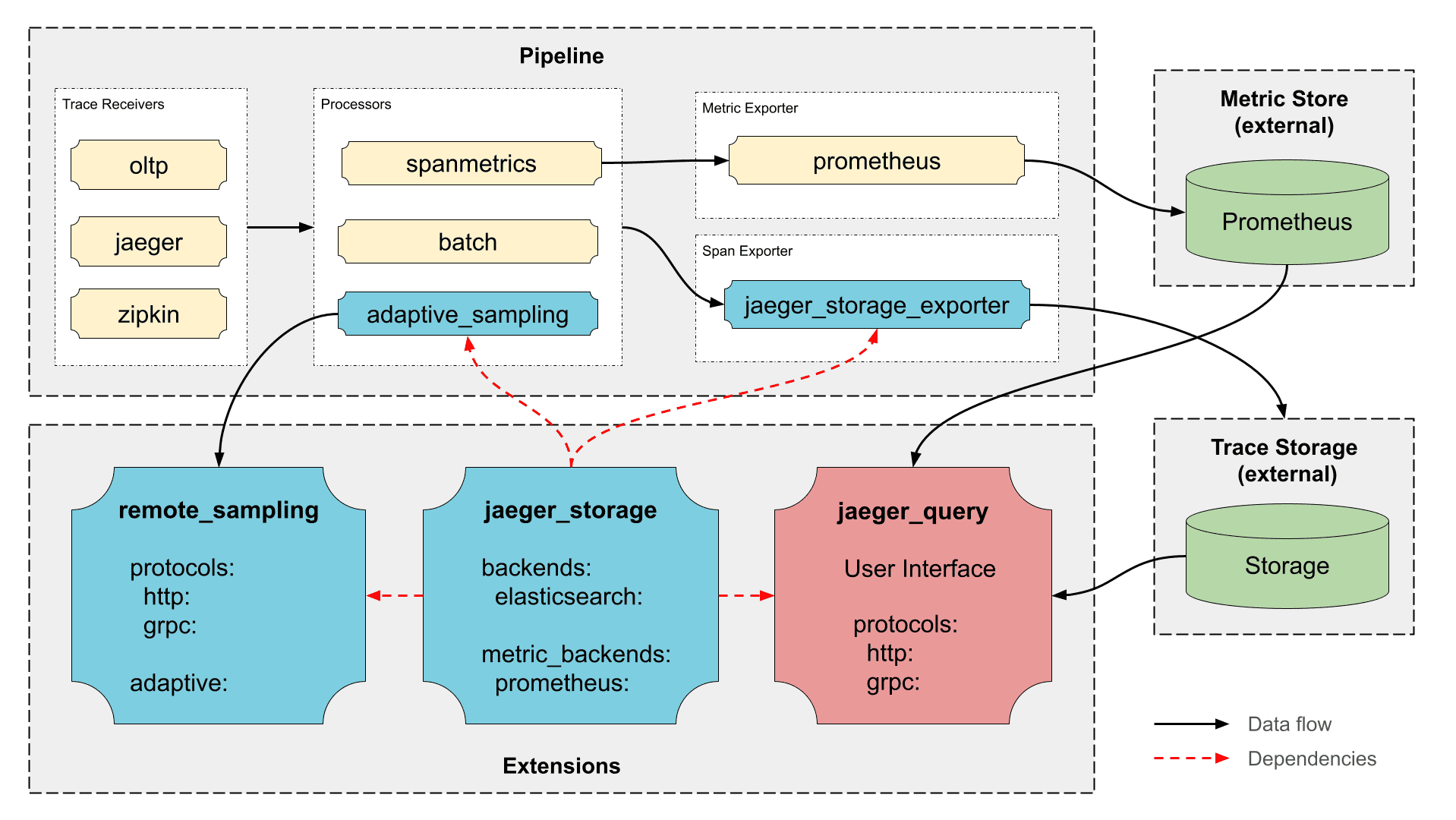

The Jaeger binary is built on top of the OpenTelemetry Collector framework and includes:

- Official upstream components, such as the OTLP Receiver and the Batch and Attributes Processors.

- Upstream components from opentelemetry-collector-contrib, such as the Kafka Exporter and Receiver and the Tail Sampling Processor.

- Jaeger's own components, such as the Jaeger Storage Exporter and the Jaeger Query Extension.

Jaeger Components

- Jaeger Storage Extension - Extensible hub for storage backends supported in Jaeger. It provides all other Jaeger components access to Jaeger storage implementations.

- Jaeger Storage Exporter - Writes spans to storage backend configured in the Jaeger Storage Extension.

- Jaeger Query Extension - Runs the query APIs and the Jaeger UI.

- Adaptive Sampling Processor - Performs probability calculations for adaptive sampling.

- Remote Sampling Extension - Serves the endpoints for Remote Sampling, based on a static configuration file or adaptive sampling.

OpenTelemetry Components

Receivers

- OTLP - Accepts spans sent via OpenTelemetry Protocol (OTLP).

- Jaeger - Accepts Jaeger-formatted traces transported via gRPC or Thrift protocols.

- Kafka - Accepts spans from Kafka in various formats (OTLP, Jaeger, Zipkin).

- Zipkin - Accepts spans using Zipkin v1 and v2 protocols.

- No-op - Used for Jaeger UI / query service deployment that does not require an ingestion pipeline.

Processors

- Batch Processor - Batches spans for better efficiency.

- Tail Sampling - Supports advanced post-collection sampling.

- Memory Limiter - Supports back-pressure when the collector is overloaded.

- Attributes Processor - Allows filtering, rewriting, and enriching spans with attributes. Can be used to redact sensitive data, reduce data volume, or attach environment information.

- Filter Processor - Allows dropping spans and span events from the collector (⚠️ may cause broken traces).
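As a rough picture of what two of these processors do, the sketch below chains an attribute-redaction step and a size-based batching step. It is a simplified model, not the processors' actual logic: the `redact_attributes` and `batch` helpers and the span dictionaries are hypothetical, and the real Batch Processor also flushes on a timeout, which is omitted here.

```python
def redact_attributes(span, blocked_keys):
    """Return a copy of the span without the blocked attribute keys."""
    attrs = {k: v for k, v in span["attributes"].items() if k not in blocked_keys}
    return {**span, "attributes": attrs}


def batch(spans, max_batch_size):
    """Yield lists of at most max_batch_size spans, preserving order."""
    current = []
    for span in spans:
        current.append(span)
        if len(current) == max_batch_size:
            yield current
            current = []
    if current:
        # Flush the final, partially filled batch.
        yield current


spans = [
    {"name": f"op-{i}", "attributes": {"user.email": "a@example.com", "http.method": "GET"}}
    for i in range(5)
]
redacted = [redact_attributes(s, {"user.email"}) for s in spans]
batches = list(batch(redacted, max_batch_size=2))
print([len(b) for b in batches])
print(batches[0][0]["attributes"])
```

Running redaction before batching means each export call carries several already-sanitized spans, which is the usual motivation for ordering processors this way in a pipeline.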

Exporters

- OTLP - Sends data in OTLP format over gRPC.

- OTLP HTTP - Sends data in OTLP format over HTTP.

- Kafka - Sends data to Kafka in various formats (OTLP, Jaeger, Zipkin).

- Prometheus - Sends metrics to Prometheus.

- Debug - Debugging tool for pipelines.

- No-op - Used for Jaeger UI / query service deployment that does not require an ingestion pipeline.

Connectors

- Span Metrics - Generates metrics from span data.

- Forward - Redirects telemetry between pipelines in the collector (e.g., span to metric or span to log).
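The idea of generating metrics from span data can be sketched as a simple aggregation. This is an illustrative model only: the `span_metrics` helper and the span fields (`service`, `operation`, `duration_ms`) are assumptions, and the real Span Metrics connector produces richer output (for example, duration histograms) than the two totals computed here.

```python
from collections import defaultdict


def span_metrics(spans):
    """Aggregate call counts and total duration per (service, operation)."""
    calls = defaultdict(int)
    duration_ms = defaultdict(float)
    for span in spans:
        key = (span["service"], span["operation"])
        calls[key] += 1
        duration_ms[key] += span["duration_ms"]
    return dict(calls), dict(duration_ms)


spans = [
    {"service": "checkout", "operation": "POST /pay", "duration_ms": 120.0},
    {"service": "checkout", "operation": "POST /pay", "duration_ms": 80.0},
    {"service": "search", "operation": "GET /q", "duration_ms": 15.0},
]
calls, duration_ms = span_metrics(spans)
for key in calls:
    print(key, calls[key], duration_ms[key] / calls[key])  # count and mean latency
```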

Extensions

- Health Check v2 - Supports health checks.

- zPages - Exposes internal state of the collector for debugging.

- Performance Profiler (pprof) - Enables Go's net/http/pprof endpoint, typically used by developers to collect performance profiles and investigate issues with the collector.